FlameRat

Members

Posts: 23

Software version: Affinity Publisher 2.5.0 (Microsoft Store)

Platform: Windows 11

Description: I'm making a document in Affinity Publisher and need to use an old vector file I made in Affinity Designer V1. I dragged the afdesign file onto the canvas and was prompted to link the file because it's too big. I agreed and selected the artboard that contains the version of the vector I want. Up to this point everything worked correctly. However, after closing the software and reopening it the next day, it froze in a state where I could pan around the canvas but couldn't make any edits, and the page that contains the vector didn't load at all. I updated all three apps to 2.5.2 and re-saved the afdesign file in Affinity Designer to convert it to V2, and the issue was solved. But I'm not sure whether it was 2.5.2 or the file version conversion that solved it.

Additional information: all the files are stored in a OneDrive syncing folder; however, I did open the afdesign file first so that it was downloaded to local storage.

-

CM0 reacted to a post in a topic:

GLSL shader as live filter

-

Years later and this is still not available... I want DXF export because laser engravers won't take PDF (obviously), and sometimes there is a need to export to 3D CAD software for extruding accurate shapes. SVG is basically useless in both cases because it can't be exported in millimeter units, so it loses its purpose: the size won't be precise anymore.
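The size problem comes down to which unit the importing tool assumes for the SVG coordinates. A minimal sketch of the conversion involved, assuming the exported coordinates are pixels at a known DPI. The 96 DPI default is an assumption here (it follows the CSS convention of 96 px per inch); the helper names are made up for illustration, and the DPI the export actually used has to be checked in the export settings.

```python
MM_PER_INCH = 25.4


def px_to_mm(px: float, dpi: float = 96.0) -> float:
    """Convert a length in pixels to millimeters for a given DPI."""
    return px / dpi * MM_PER_INCH


def mm_to_px(mm: float, dpi: float = 96.0) -> float:
    """Convert a length in millimeters to pixels for a given DPI."""
    return mm / MM_PER_INCH * dpi


if __name__ == "__main__":
    # A 300 px wide shape means very different physical sizes
    # depending on the DPI the receiving tool assumes.
    for dpi in (72.0, 96.0, 300.0):
        print(dpi, px_to_mm(300.0, dpi))
```

If the exporting and importing tools disagree about the DPI, every dimension is scaled by the ratio of the two values, which is exactly the kind of error a millimeter-based format avoids.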

- 404 replies

-

Deburger reacted to a post in a topic:

Square color wheel

-

polite reacted to a post in a topic:

GLSL shader as live filter

-

Given that they are still developing the enterprise version, my guess is that they will just keep the current "pro" version as-is, maybe with some minor gimmicks, and shift the focus to integrating the software into their product design lines. That would be very bad news for artists who aren't product designers. I was never very into the software except on mobile (where I have no choice, since I'm an Android user), and now I'll probably use it even less. I'm mainly a PaintToolSAI2 user. Affinity Designer got me into doing vectors, but Sketchbook pretty much got me into nothing (well, except maybe that its pencil textures are a bit better than those in other affordable software). I'm still waiting for CSP EX to go on discount in China (the EULA says Chinese users can't use any version from outside China, and I don't want to pirate it), and for Animation Paper, which has absolutely no ETA for an open beta.

-

Recently I've been dealing with a lot of 32-bit renders. I've started using an ambient occlusion layer to add more depth, but I find the resulting image a bit too intense if I just multiply the AO layer onto the RGB layer. Therefore, besides lowering the opacity, I also tried applying a Curves or Levels adjustment to the AO layer. However, as soon as I add the adjustment, the whole image dims a lot, even without me changing any settings. It could be a bug, but I think it would also make sense if it's by design (for example, some pre-processing enabled by default so that the adjustment feels more "linear" to the human eye). I can still get things done after some trial and error, but the fact that the adjustment's behavior feels kind of "random" bugs me a bit. I want to know exactly how adjustment layers work in a 32-bit environment so that I can be more confident when using them.
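One thing that can make adjustments feel unpredictable in a 32-bit document is that the same number means something different in linear light than in a gamma-encoded space. The sketch below is not a description of how Affinity Photo handles adjustments internally. It only uses the standard sRGB transfer function and a plain multiply blend to show the size of the difference; the function names are made up for illustration.

```python
def srgb_to_linear(c: float) -> float:
    """Decode an sRGB-encoded value (0..1) to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """Encode a linear-light value (0..1) to sRGB."""
    if c <= 0.0031308:
        return c * 12.92
    return 1.055 * c ** (1.0 / 2.4) - 0.055


def multiply_with_opacity(base: float, ao: float, opacity: float) -> float:
    """Multiply blend, mixed back with the untouched base by opacity."""
    return base * (1.0 - opacity) + base * ao * opacity


if __name__ == "__main__":
    ao_display = 0.5  # an AO value that looks like mid-grey on screen
    ao_linear = srgb_to_linear(ao_display)
    base = 0.8
    # Multiplying by the display value and by the linear value
    # darkens the base by very different amounts.
    print(multiply_with_opacity(base, ao_display, 1.0))
    print(multiply_with_opacity(base, ao_linear, 1.0))
```

A grey that reads as 0.5 on screen is only about 0.21 in linear light, so a curve or multiply that operates on one representation while the user thinks in the other will look much darker or brighter than expected.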

-

Scanner support has already been asked for by a few people, but I'd like to make it clearer why it makes sense to scan in AP rather than import scans from other software. There are two things that external scanning software doesn't quite provide: 16-bit depth and color management.

So far I haven't found any software that supports 16-bit scanning (maybe Photoshop can, but I'm not using that for obvious reasons), which makes archiving slightly more "destructive", since later color management might create more banding. For drivers that have color management this might not be much of an issue, but for people who need to scan film negatives (I'm not one of them, at least not yet), no 16-bit support might mean more color artifacts than there should be.

As for color management, well, that's easier to explain. If a scanning feature is introduced one day, it should scan into the Develop persona.
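The banding argument can be made concrete by counting how many distinct codes a scan has available inside a narrow tonal range, which is what a later levels stretch or color conversion has to work with. This is a rough sketch with made-up function names; the darkest 10% of the range is an arbitrary choice, and real scanner noise is ignored.

```python
def quantize(value: float, bits: int) -> int:
    """Quantize a 0..1 value to an integer code at the given bit depth."""
    levels = (1 << bits) - 1
    return round(value * levels)


def distinct_codes(bits: int, lo: float = 0.0, hi: float = 0.1,
                   samples: int = 10000) -> int:
    """Count the distinct codes available in the tonal range [lo, hi]."""
    codes = set()
    for i in range(samples):
        value = lo + (hi - lo) * i / (samples - 1)
        codes.add(quantize(value, bits))
    return len(codes)


if __name__ == "__main__":
    # Stretching this range to full scale can never produce more
    # distinct tones than the codes counted here.
    print("8-bit: ", distinct_codes(8))
    print("16-bit:", distinct_codes(16))
```

At 8 bits the darkest tenth of the range holds only a couple of dozen codes, while at 16 bits it holds several thousand, which is why a heavy correction on an 8-bit scan tends to show visible steps.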

-

Have a button in the "Layer" menu that converts a layer with an alpha channel into a solid layer plus a mask containing the alpha info.
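In terms of pixel data the request amounts to something like the sketch below. It assumes straight (non-premultiplied) RGBA values in the 0..1 range, and the function names are hypothetical. How the color of fully transparent pixels should be filled in is left open here; this version keeps whatever RGB is stored.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float, float]  # straight RGBA, each 0..1


def split_alpha(pixels: List[Pixel]) -> Tuple[List[Pixel], List[float]]:
    """Split RGBA pixels into an opaque layer and a separate mask."""
    solid = [(r, g, b, 1.0) for r, g, b, _ in pixels]
    mask = [a for _, _, _, a in pixels]
    return solid, mask


def recombine(solid: List[Pixel], mask: List[float]) -> List[Pixel]:
    """Apply the mask back onto the solid layer's alpha."""
    return [(r, g, b, a * m) for (r, g, b, a), m in zip(solid, mask)]


if __name__ == "__main__":
    layer = [(1.0, 0.5, 0.0, 0.25), (0.0, 0.0, 1.0, 1.0)]
    solid, mask = split_alpha(layer)
    print(solid)
    print(mask)
    print(recombine(solid, mask) == layer)
```

The split is lossless as long as the mask stays attached: recombining the solid layer with its mask gives back the original pixels, and the mask can then be edited without touching the color data.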

-

For example, say I want to create a mask that blends a layer into the one below with a gradient. I would have to "rasterize as mask". That works fine if I don't want to edit it further, but it's not uncommon that I do, and sometimes I want an even more complex gradient mask (for example, a reflection mask made of binary-combined vectors painted with gradients). To use it as a gradient mask rather than a clip mask, I have to rasterize it, which is irreversible and not very "edit-friendly". The idea is simple: maybe I should be able to click on the mask icon and switch the mask type (between clip and gradient).

-

shojtsy reacted to a post in a topic:

GLSL shader as live filter

-

This just came to my mind. It might be a little crazy, but it might not be that hard to implement, and there would be plenty of uses for it. The idea is simple: allow users to write their own live filters in GLSL. Something like this:

    // I haven't written GLSL in a while, so treat this as pseudocode
    uniform sampler2D source;  // the source image
    uniform vec2 sourceSize;   // the rasterized size of the source image in px

    // user-definable sliders, driven by the doc comments
    struct Settings {
        /**
         * @name Slider A
         * @description The Slider A
         * @minValue 0
         * @maxValue 100
         */
        float sliderA;
        /**
         * @name Slider B
         * @description The Slider B
         * @minValue 0
         * @maxValue 100
         */
        float sliderB;
    };
    uniform Settings settings;

    in vec2 pos;    // the normalized position (coordinate) of the current fragment, aka pixel
    out vec4 color; // the result this filter should produce

    // everything above should be auto-generated, with the settings produced through a GUI,
    // though users could type them in themselves
    // below is a dummy filter that simply outputs whatever the source image is
    void main() {
        color = texture(source, pos);
    }

This would already be GPU-friendly (since it's basically a fragment shader), so GPU acceleration could be used automatically. It is also ready for 32-bit image editing by default. I don't expect many people to write their own filters, but those who want to would benefit, and other people could use their code without knowing how to write one, which means Affinity Photo could have a potentially unlimited number of live filters.

There could be more features, for example side-chaining other images as secondary sources, but that only belongs on the roadmap once this one is in, so it's outside the topic of this suggestion.
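To give an idea of how the host application could turn the `@name`, `@minValue`, and `@maxValue` annotations from the snippet above into GUI sliders, here is a rough sketch of the parsing step. The annotation format is the hypothetical one from this suggestion, not an existing Affinity or GLSL feature, and `parse_sliders` is a made-up helper. A real implementation would need a proper parser rather than regular expressions.

```python
import re
from typing import Dict, List

# Hypothetical annotation format, taken from the doc comments in the suggestion.
BLOCK_RE = re.compile(r"/\*\*(.*?)\*/\s*float\s+(\w+)\s*;", re.DOTALL)
TAG_RE = re.compile(r"@(\w+)\s+([^\n*]+)")

EXAMPLE = """
/**
 * @name Slider A
 * @description The Slider A
 * @minValue 0
 * @maxValue 100
 */
float sliderA;
"""


def parse_sliders(shader_source: str) -> List[Dict[str, object]]:
    """Collect slider definitions from annotated float declarations."""
    sliders = []
    for body, field in BLOCK_RE.findall(shader_source):
        tags = {key: value.strip() for key, value in TAG_RE.findall(body)}
        sliders.append({
            "field": field,
            "name": tags.get("name", field),
            "min": float(tags.get("minValue", 0)),
            "max": float(tags.get("maxValue", 1)),
        })
    return sliders


if __name__ == "__main__":
    for slider in parse_sliders(EXAMPLE):
        print(slider)
```

Each parsed entry carries the uniform field name plus a label and range, which is all a panel needs to draw a slider and feed its value back into the shader.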

-

The features of these two personas are actually pretty similar; the former is for raw photos while the latter is for 32-bit HDR. (At least that's what I assume they are for.) I'm not going to make a full comparison, but my common sense says that OpenEXR is the "raw" format for 3D rendering, and I would use Affinity Photo to "develop" it into the final result. It does contain accurate color values, after all. So it feels weird that when I use the Develop persona, it treats the picture as a badly developed photo rather than a RAW image.

Therefore, I'd say the two personas could just be one. This might cut down some confusion when dealing with OpenEXR files, and might help camera RAW processing a bit as well: everything is converted losslessly into 32-bit, and then the whole workflow can be 32-bit without any additional cost (except performance and memory), which could make live filters produce better results (given that live filters work in 32-bit internally).

-

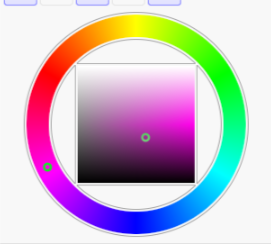

Again, if I could, I would not want to choose between having a circle hue picker and a square S-L picker. Currently you can only have one, but not both, and that's why I post the request in the first place.

-

Aside from the fact that an exact 180-degree or 120-degree offset usually won't look that pleasing, it's still inefficient to type in the digits when you can just click on the desired point on the wheel. That field is usually only good for copying values in and out, or for when there's no color picker available (say, if you are quick-fixing a website's color palette).

-

It's more the linearity and consistency of the picker that matter. The wasted resolution is really just a minor thing.

-

@Sima Actually I would prefer the one I showed, because it makes the most sense to me. (The one in CSP you showed is the HSV/HSB picker, which only makes sense if you only do subtractive blending and pretty much no additive blending.) The rotation of the triangle is certainly hard to get used to, but the weird thing about the triangle is that, to desaturate a color by the same amount, you have to drag the pointer a different distance if the luminance isn't the same. The same goes for adjusting the luminance when the saturation isn't the same. (Otherwise the diamond-shaped picker in Autodesk Sketchbook would be even more suitable. CC: @walt.farrell)

The reason I stopped using HSB/HSV is that it's awkward to adjust saturation and luminance with it when doing art that uses additive blending (that is, thinking about how the scene would be illuminated rather than as if it were painted on an actual piece of paper). Below the mid-point, brightness goes from bottom to top, but to get even brighter you then have to move the pointer from right to left. And to adjust saturation, you have to move the pointer at a tilted angle, and that angle isn't constant.

As for the hue circle, one more advantage is that it's more natural to use if the piece uses a lot of purple shades, since there's no seam at the purple hue. And yes, these are all just personal preferences, but they still matter, as they affect productivity a bit.
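The difference between the two models is easy to see in numbers. The sketch below uses Python's standard `colorsys` module (which names the second model HLS and takes the arguments in that order); the wrapper names are made up for illustration. It walks from black to white for a red hue in both models.

```python
import colorsys
from typing import Tuple

RGB = Tuple[float, float, float]


def hsl_to_rgb(h: float, s: float, l: float) -> RGB:
    """HSL to RGB; colorsys expects the order (h, l, s)."""
    return colorsys.hls_to_rgb(h, l, s)


def hsv_to_rgb(h: float, s: float, v: float) -> RGB:
    """HSV/HSB to RGB."""
    return colorsys.hsv_to_rgb(h, s, v)


if __name__ == "__main__":
    # HSL: one axis (lightness) runs from black through pure red to white.
    for lightness in (0.0, 0.25, 0.5, 0.75, 1.0):
        print("HSL", lightness, hsl_to_rgb(0.0, 1.0, lightness))

    # HSV: value only runs from black to pure red...
    for value in (0.0, 0.5, 1.0):
        print("HSV value", value, hsv_to_rgb(0.0, 1.0, value))
    # ...and reaching white means changing direction and lowering saturation.
    for saturation in (1.0, 0.5, 0.0):
        print("HSV saturation", saturation, hsv_to_rgb(0.0, saturation, 1.0))
```

In HSL a single vertical drag covers the whole range from shadow to highlight, while in HSV the same journey needs a move along value followed by a move along saturation, which is the "bottom to top, then right to left" motion described above.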

-

That option would have the hue picker as a straight line rather than a circle. If I had to choose between a circular hue picker and a square saturation-luminance picker, I would choose the circular hue picker (otherwise it would be a lot harder to pick contrasting colors). But the thing is, why should I have to choose between the two?
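The reason a circle helps with contrasting colors is that hue is an angle: the complement sits directly opposite on the wheel, while on a straight strip it sits at a wrapped offset that is harder to judge by eye. A small sketch of that arithmetic, with hypothetical helper names and hue measured in degrees:

```python
from typing import Tuple


def rotate_hue(hue_deg: float, offset_deg: float) -> float:
    """Rotate a hue by an offset, wrapping around at 360 degrees."""
    return (hue_deg + offset_deg) % 360.0


def complement(hue_deg: float) -> float:
    """The hue directly opposite on the wheel."""
    return rotate_hue(hue_deg, 180.0)


def triad(hue_deg: float) -> Tuple[float, float, float]:
    """Three hues spaced 120 degrees apart."""
    return (hue_deg, rotate_hue(hue_deg, 120.0), rotate_hue(hue_deg, 240.0))


if __name__ == "__main__":
    print(complement(300.0))
    print(triad(300.0))
```

On a wheel these are starting points that can be nudged by eye with a single click, which fits the point that an exact 180 or 120 degrees rarely gives the most pleasing result.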

-

Something like this: It's not a big deal, but to me, this is a bit more handy to use than the triangle one. If this thing could be an option, it would be a great addition to those who prefer it over other color picking methods.