Search the Community

Showing results for 'benchmark'.

-

Hello, it looks like there is no topic for the new version of the benchmark. Here is a basic iPad 8th gen. I am just curious, does anybody have results for the newest MacBook Air 15 (M3)?

-

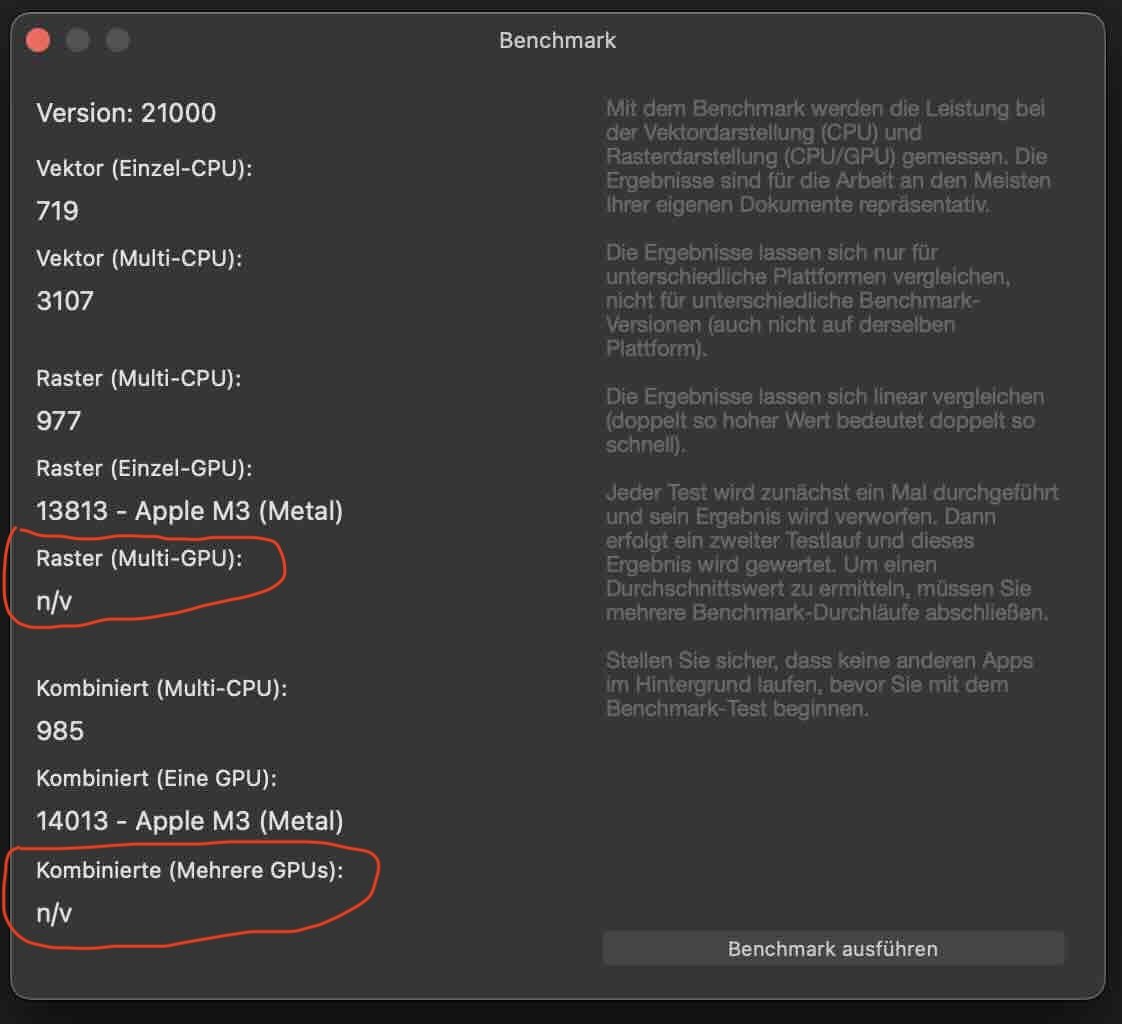

@Udo B. I can't help with your issue but could you do us a favour? Some of the forum users would like to know how Affinity Photo performs on the new MacBook Air M3. Could you please run the benchmark test and then share a screenshot of your results here?

1. Start Affinity Photo.
2. Choose Help > Benchmark.
3. Click Run Benchmark. Do nothing else until it is finished - the Run Benchmark button will appear again when it is finished.
4. Take a screenshot.

Thank you for your help.

-

Hello, thanks for the suggestions! But there were no changes, either in Mac Safe Mode or after a normal boot. The performance remains poor.

I bought Affinity Photo in the Apple App Store. Does that possibly play a role? (Allegedly, for example, the version from the App Store takes longer to start - on my newly rebooted system about 22 sec.)

I uploaded my .aphoto file using the Dropbox link. It is far from optimally structured and you can certainly do many things better and differently. But as I said, everything ran absolutely smoothly under Sonoma 14.4.0.

Furthermore, I carried out the benchmark requested by MikeTO and attached the corresponding screenshots. One benchmark ran alone, the other one with CPU/GPU history for more information. During both tests, the computer was connected to the power supply and power save mode was off. A screenshot of my Affinity Photo performance settings is also included.

Thank you for your support! Best regards

-

Hey there! First of all, thanks @WMax70 for the settings. I've just tested them, and besides the auto to Gen4, the other two were already disabled in my case. There is no improvement on any benchmark in my case with these new settings.

Regarding my score, I should also mention that at this time I'm using the MSIX version of Affinity, which has much worse benchmark scores than the MSI installer version. This was documented in other threads where I've been active; if you are interested, just look at my other posts/replies. The current standing theory is that MSIX is at least 20-25% slower in the benchmark. The only reason I am using MSIX is that Affinity Photo startup lags a bit LESS on the MSIX version. The MSI version pretty much hangs very hard when you run the program, mostly due to the dumb way it reserves video memory from the OS. That is something I've mentioned before in my posts and was obviously disregarded by the developers, but it's easy to test using Process Lasso or other advanced process management software.

I'm glad the software works well for the Apple users at least, but in the x86 world OpenCL is the biggest joke possible in terms of performance. Affinity can't even use 10% of my GPU power because all it does is rasterize and use 1 render thread and 1 process thread. There is some rudimentary multithreading on the CPU side I think, but the GPU is dormant. That's right boys and girls, that's what Canva bought in 2024, aren't they in for a treat.

-

Thanks for running the benchmark. I've added your system to the table over here, which shows that the M3 has very high single CPU core performance. This won't be terribly useful for you but could help somebody with an M1 trying to decide whether to upgrade to an M3 - even if they don't know what each metric impacts, they can get an idea of how much of an increase they'd see across the board.

-

new desktop

Granddaddy replied to pioneer's topic in Affinity on Desktop Questions (macOS and Windows)

What's your limit on cost? I consider 1 TB of storage to be the minimum that anyone should have on any kind of computer. But 1 TB of storage would be totally inadequate for my own use. My last two desktops have had both a 1 TB SSD plus a 2 TB HDD. Also a dedicated graphics card and 16 GB of RAM are minimal.

But it all comes down to cost. In the past decade I have kept my purchases between $1600 and $1800 (U.S. dollars). The IBM PCjr I bought nearly 40 years ago in the mid-1980s cost me more than $2500, back when $2500 was a considerable sum of money. Today's computers are bargain-basement cheap in my mind.

It all depends on how much you are willing and able to spend and how much pain you are willing to endure if you choose to run a barely adequate machine that might be suitable for no more than e-mail, a little word processing, and simple web browsing. If you want to do graphics editing and run multiple higher function applications, then treat yourself to a fully functional machine that will be useful for the next decade to run whatever software you may get involved with in the future. My wife is still using the computer I bought for about $1400 to do photo and video editing in 2013.

When deciding how much to spend on an item, I sometimes convert its cost to pizza equivalents. How many pizzas could I buy for that price? Smart-phone-equivalents are another useful benchmark. How many iPhones could one buy for the cost of the computer one is considering? How much more useful is that computer than a cell phone?

-

Old thread, but in case you still have the problem: do you have OpenCL hardware acceleration selected? It makes a great difference having it disabled. Even with a 3060, if I leave it on, it will lag.

Also, it works better for me NOT to use the 'Windows Ink' setting in Preferences/Tools, but "high precision" instead, and also to disable it in your Wacom (or other brand) panel (outside Affinity, on Windows), in the profile you created there for Affinity (whichever the app, this works the same for all 3), specifically in the "Projection" tab (in Wacom's), where "Windows Ink" might be checked. The brush typically feels more fluid for me by doing so, and more importantly, the software is more stable and free of glitches once I disable that. YMMV, as this issue doesn't happen on every system, but it's a good measure to try. OpenCL acceleration is IMO better turned off in most cases.

Quick note: I was able to get fluid painting with just a dirt cheap NVIDIA 1650. The key is that AMD GPUs don't play well with Affinity, and that is not Affinity's fault but due to bugs in AMD's drivers, as has been discovered. My advice, though, is to use a 3060. They are kind of cheap now, and my 3900X (becoming old now; single CPU scores are much higher with just a Ryzen 5600) with an NVIDIA 3060 gives me absolutely fluid painting, even with large and complex (oil, etc.) brushes in Affinity Photo.

Some settings in the brush can slow it down a lot (this is not unique to Affinity, but very noticeable). For example, be careful with the "spacing" value. The bigger the value, the faster. But be careful not to raise it so much that you start seeing a dotted line or pattern-repeating strokes. Certain dynamics in the brush tab might affect it, too. Playing with that is how you can achieve ultra fast brush painting, provided you first took care of what I mentioned above.

I paint with no lag in Adobe RGB color space (wider gamut), 16 bits/channel (as it gives better gradients and better transitions, but it is taxing for a mid range machine like a Surface Pro really is - a compromise for being portable and everything). Many apps are using the GPU these days for the brush. So many... Photoshop, for a while now, is almost useless without a 2GB VRAM video card and a certain benchmark score; below that you are painting with lag. PaintTool Sai (extreme performance with large canvases and large brushes, best in that) and ClipStudio use the CPU for almost everything. That's the difference you notice, as the Surface Pro doesn't have a real GPU. Corel Painter and PaintStorm Studio use the GPU very heavily. Corel Painter even makes you run a benchmark the first time you run the program, to let you know if you are going to be able to paint or not, depending on your hardware... But I think you can try all that and get fluid painting in the 2.x Affinity apps.

-

Hi, Google Slides has a few very useful features which Affinity is missing. If you could implement some or all of them it would be great:

1) The ability to scroll text size changes with different sizes of text selected in the same text box. I frequently use different sizes of text (and different fonts) in the same paragraph. Being able to vary it all by just scrolling when adjusting design layout is extremely useful and fast vs having to manually select groups of equally sized letters and adjust them independently.

2) Implement a proper format painter that can be used to target selected sections of text. You can currently do the copy + ctrl alt v to paste some characteristics, but that affects the whole text in the target box, not just a selected area (e.g. trying to have bolded first words in a paragraph but not the rest of the text: if you copy any characteristics they apply to all text, not just the selected text).

3) Callouts: currently you can't extend the "arrow / pointer" part of the callout beyond a virtual box, which is very limiting. Normally you can pull the tip of the pointer past the limits of the text box part of a callout (see Google Slides for the ideal implementation).

4) Guides that span multiple pages / artboards. Snapping currently (sometimes annoyingly) spans multiple artboards. It would be useful if guidelines set up to help alignment also spanned multiple aligned boards.

5) Going just beyond matching Google Slides features: Layer Based Effects (obvs) currently only apply to whole text objects, not to selected sections of text. So if I want outlined first words, I need to chop out the first words of a paragraph, make space, then insert a new text frame in the space using the effects I want whilst maintaining spacing manually. It'd be nice if they didn't, but I get that's major surgery.

And obviously if you can sort out a vectoriser I'll happily pay an upgrade fee.

-

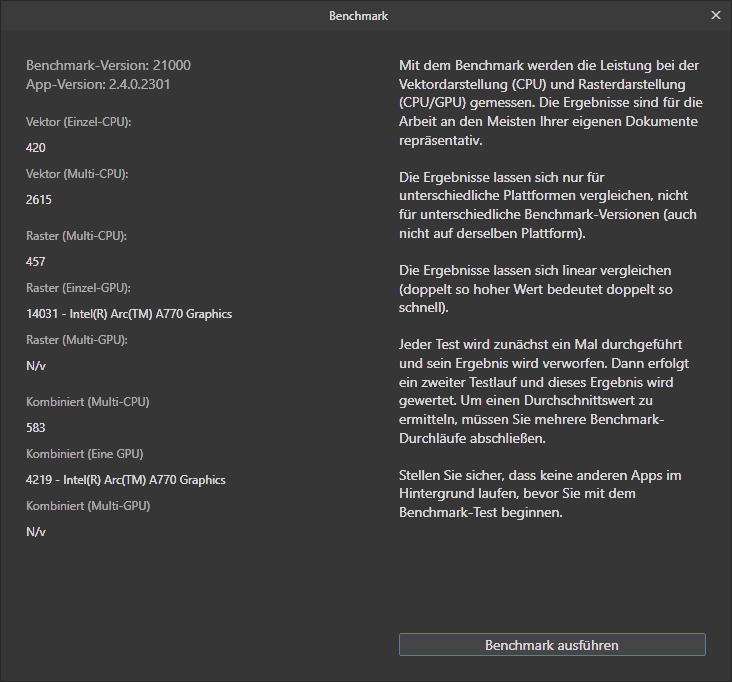

Here is another benchmark for the comparison list: AMD Ryzen 7 5700X (8 cores), Intel Arc A770 16 GB LE, Windows 11.

-

@thegary and @slizgi Could you please tell me which CPU you used for the benchmarks you posted in this thread? I'd like to add them to the benchmark table we're compiling over here. Thank you.

-

I can't help with the issue, but what CPU was this test run on? We're compiling a table of benchmarks and it would be nice to add your benchmark report. Thank you.

-

I really do not understand why Affinity does not allow benchmark results to be uploaded automatically to a central repository, e.g. in JSON format via a web service. Using screenshots is so 1990. Users already have the option to authenticate with Affinity if security is a concern. If you don't provide an upload, then at least allow copying the results as JSON. Some basic PC parameters like CPU, GPU, memory, driver etc. could also be included from the diagnostics function.
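
To make the suggestion above concrete, here is a minimal sketch of what a copyable JSON result might look like. Everything about the format is an assumption: Affinity has no such export, and the function `build_benchmark_record` and the field names (`benchmark_version`, `app_version`, `scores`, `system`) are invented for illustration. The sample values are the GTX 970 scores posted elsewhere in these results; fields that the post did not state are left as `None`.

```python
import json


def build_benchmark_record(benchmark_version, app_version, scores, system):
    """Bundle one benchmark run into a JSON-serializable dict (hypothetical schema)."""
    return {
        "benchmark_version": benchmark_version,
        "app_version": app_version,
        "scores": dict(scores),
        "system": dict(system),
    }


record = build_benchmark_record(
    benchmark_version=11021,
    app_version="1.10.6.1665",
    scores={"raster_single_gpu": 3623, "combined_single_gpu": 4573},
    # CPU and OS were not given in the forum post, so they stay empty here.
    system={"cpu": None, "gpu": "GTX 970", "os": None},
)

# Text a user could paste into a forum post or send to a collection service.
text = json.dumps(record, indent=2, sort_keys=True)
print(text)
```

A structured record like this would let someone compiling a comparison table sort and filter results without retyping numbers from screenshots.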

-

I made a table of benchmarks many versions ago. If enough people can share benchmarks here I'll put them together into a table again. Please include details on the CPU, GPU, and Mac/Win/iPad. If it's an Apple M1/2/3, please include the number of CPU and GPU cores to make comparisons easier. Here's my benchmark for a MacBook Pro with M1 Pro (10 core CPU, 14 core GPU).

-

I have been looking at that arrangement. Possibilities are an AMD Ryzen 9 7950X or 7900X with an RTX 3060 12 GB or an RTX 4060 Ti 16 GB. Or I could just go with an Intel Core i9 14900K with either the 3060 or 4060 GPU. I just fancied trying an all AMD build for once. I have had replies to the same question posted on Affinity Facebook, copied below.

Hi, I have Version 1 and Version 2 (latest release versions) of the Affinity Suite of apps. I now need to update my Windows 10 desktop PCs to machines that will run Windows 11. Projected build specs are AMD based, using ASRock motherboards with either an AMD Ryzen 9 7900X or Ryzen 9 7950X CPU. Can you please tell me if the AMD graphics card incompatibilities that have been discussed for the last couple of years have been FULLY resolved yet? If not, can you please identify which graphics cards, either AMD or Nvidia, are FULLY compatible and FULLY functional with Affinity programs? Thanks, Bryan

Affinity: Hello! V2 had updates to the app's memory handling with hardware acceleration enabled which significantly improved performance on the AMD RX series cards, so provided that the GPU meets the Direct3D level 12.0 requirement (which most modern GPUs will) for hardware acceleration, it will be fully supported. I've linked to the thread below where this is discussed for more info. https://forum.affinity.serif.com/index.php?/topic/136068-amd-radeon-rx-hardware-acceleration/&do=findComment&comment=1066614

Thank you for your response. I have followed the link provided and read the posts. Although there seem to be some improvements in benchmark test results, it seems that there were still some problems at the time. The last post to that thread was in May 2023. Are you telling me that there have been no further improvements, corrections or developments between May 2023 and February 2024 to fully correct this situation? Also, what has been the response to date from AMD regarding this matter? Thanks again for your input.

Affinity: This update was to address the significant performance issues with the RX series cards, and as shown in the follow up posts it resulted in drastically improved benchmark scores and therefore performance. Outside of this thread I'm not aware of another where issues have been specifically raised against performance on AMD. OpenCL support is likely to continue being improved as further software updates are released.

-

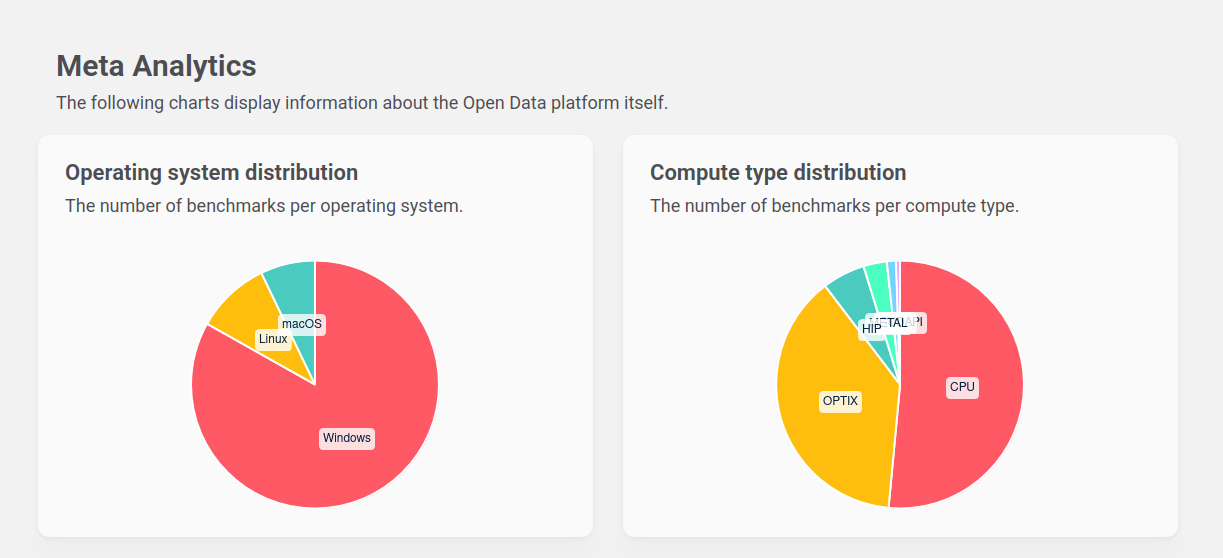

It's important to note, my primary motivation to post on this topic is to help other people who are looking for ways to use Linux as their main daily driver and are having difficulty building a complete pipeline for image creation. I'm not trying to convert people who are happy using other systems. I don't see it as a religion, just an option, and we are lucky to have these kinds of options at zero cost of entry. The only cost is the time to learn something different to Windows or macOS.

I am also not offering any advice or insight into Linux vulnerabilities, especially for critical systems. I'm not sure how that is relevant for people who want to edit photos with Affinity Photo on a desktop PC; I would assume any OS not designed for critical systems would be quite vulnerable to targeted attack. Your primary motivation doesn't come across like it's driven by any concern for people's data security or a wish to be helpful in general. You seem to be putting most of your energy into shouting down anyone who wants to use Linux as a desktop environment, using your experience in critical systems as the main crux of that argument.

The fact remains: if Linux is such a non-viable option as a desktop environment, why has it persisted for all these years? People are actually using it as a desktop environment and developing compatible multi platform software. It would be safe to say uptake of Linux has increased in recent years; you'd expect something that has little chance of being a major desktop environment to see dwindling numbers, not increasing numbers.

My primary industry is CGI and VFX. I use Blender for many things and it is safe to say it is a popular Linux application. If you look at the benchmark data for their pathtracer, you can see benchmark results categorized by OS. Here you can see Linux actually has more submissions than macOS.
Whilst this is not definitive and it is possible some are render nodes, it does appear that more than 4% of Blender users are Linux based. Blender also works on all Linux distros using a single deployment. The Blender Foundation also produces films with it using their in house team, who all use Linux. https://opendata.blender.org/

You also have to look at the gaming scene. Linux has seen a huge uptake since Valve integrated the Proton compatibility layer into Steam. Bear in mind, Gabe Newell (owner of Valve) is ex Microsoft staff (13 years & oversaw the first 3 releases of Windows). They also use Arch Linux as the base for SteamOS on the Steam Deck portable gaming device. Unreal Engine directly supports Linux and uses a multi distro deployment. It is totally viable to develop realtime 3D content and video games using Linux with nothing but UE5. You also have Autodesk Maya, Nuke, Flame and even Adobe Substance Painter/Designer. Some major film post production studios use RHEL to do VFX for feature films.

When you couple all of this with excellent Windows emulation of many games and software via Wine/Lutris, I would say it is indeed an incredibly viable option for creative professionals. I haven't booted into Windows for over 18 months. Linux even reserves less of your VRAM for the OS, which directly improves rendering performance.

If you are correct in what you say about Linux not being ready, then you are presenting at the very least a highly subjective opinion and at worst a polarizing, slightly out of touch opinion, if you look at who is using it in creative industries as an example. I think the take home point here is: can Linux be a viable desktop environment? Yes, absolutely (I am using it as one), but it is relative. You can make an argument from whichever lens you choose to view it through. And no one opinion is better than another. It's just what works for you and what does not.
I'm not saying everyone can use Linux as a desktop environment but you appear to be suggesting that no one should bother trying based on your perspective. Which IMO is not constructive.

-

Hey folks, first I want to thank Serif for the great v2 upgrade - for me this is a real game changer (especially the Publisher iPad app and the new iPad optimized UI). I totally love it and it changes my workflow completely in a very positive way. And to optimize it further, yesterday the delivery guy brought me a nice new tool for my work.

I want to share the benchmark results here in case anyone else is considering getting a new iPad. I am now using a 12.9" M2 iPad with 16GB RAM (it is the companion to a 15" 2016 MBP which is significantly slower in the Affinity benchmarks).

I hope this is a good place for the results, because @MikeTO wished for a new thread (he is the guy who brought all the results together in the past) and I think the beta Photo forum is not the right place for such a thread. If anybody else has a better idea, maybe the mods could consider moving it. And if you have other results to share with us, this could be a very useful thread for getting an overview of which system runs how well with the Affinity suite. Here is my result:

-

Thanks for your input. I never place vector graphics in the document because this always causes issues. I always convert them to Affinity file format and copy the layers/elements into the target document so they are a "native" element in the document. I strictly work in one single CMYK color profile (eci300%, because it's required for the printers). In the documents where I run into issues I don't use any raster layers or vector incompatible content; everything is in the same color space and color profile.

Everything is Affinity created vectors, and the line of text that errored out last time was a simple white text with a 0.3pt 100k stroke applied, with the last letter all of a sudden not getting its stroke on export. I didn't do anything except resize the stroke a few times and it came back in the export, which clearly hints towards a bug or application issue. Once fixed, I cannot replicate it anymore. Before "refreshing" the stroke, the issue would be on ALL exported PDFs unless it's a fully rasterized one. I tried all export profiles and export settings; only fully rasterized works, any vector based output is borked. Until, like I said, I resize the stroke, and then it exports fine, at least visually. It exported fine before and I didn't touch that line of text, so apparently working on other areas of the document triggered something that breaks it.

Update: so I decided to try installing the regular .msi version and wiping the appdata of the MSIX version of the Affinity suite. While I still have issues with the print upload with this particular printer (I am in contact with their technical support to nail down the issue, will report back), I noticed a jump in performance. The MSI version seems to be quite a bit faster than the MSIX version and the scores in the Affinity Photo benchmark are also higher. So I got that going for me, which is nice. Right now I cannot say anything about corruption issues because I just installed it. I don't have big hopes, but it's faster, so maybe it's also having less trouble with PDF exports lol

-

Any updates about this improvement? I wonder if this also improves V1 performance. I have benchmark version 11021, and I'm planning to upgrade my old NVIDIA GPU and trying to decide between NVIDIA and AMD. Is there anybody who could run it with an AMD RX 5-7xxx series card? This is what I get with a GTX 970 and the v11021 benchmark (Affinity Photo 1.10.6.1665):

Raster (single GPU): 3623
Combined (single GPU): 4573

-

For continued sharing of 11021 (Photo 1.10.4) results. Please include details on your CPU since that's not detailed in the benchmark dialog. Thanks.

-

Ok. So I retested the AMD 5600XT. I updated my drivers for the NVIDIA 3080 and retested again on the new benchmark. I ran each test 3x for averaged results and highlighted the top score for Single GPU. Both are tested on the current official beta (2.0.3.1670, Benchmark Version 20300). Here are the results.

Hardware: AMD 5600XT 6G
Results Variance: 5% ~ 6%
Sandboxed (2.0.3.1670 Beta Benchmark Version 20300)
Unofficial Unsandboxed (2.0.3.1670 Beta Benchmark Version 20300)

NVIDIA RTX3080 12GB
Results Variance: 18% ~ 20%
Sandboxed (2.0.3.1670 Beta Benchmark Version 20300)
Unofficial Unsandboxed (2.0.3.1670 Beta Benchmark Version 20300)

Summary: There is a similar drop in performance for sandboxed versus unsandboxed on the 20300 benchmark as on the 20000 benchmark with the NVIDIA 3080.

So to add to the weirdness: on the latest driver for the Nvidia (Nov 13 -> Dec 5), my OpenCL performance went up 8.7% (184566 vs 210300) according to Geekbench. That could've explained the variance between @rvst's 3070 and my 3080 benches. However, I retested the old v2 unsandboxed benchmark a few times and didn't get much change from the last result. I included the best result from the old benchmark for Single GPU, unsandboxed only because I don't have access to the sandboxed v2 on this machine now: 12809 vs 13342 now. Not much change.

EDIT 12/16: Retested Geekbench when I had more time and it does vary, so the above result should be considered normal.

AMD 5600XT Info

NVIDIA RTX3080 12G Info (Updated Drivers Dec 05 2022)
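
For anyone repeating this kind of comparison, the arithmetic behind "average of three runs" and "not much change" can be sketched as below. The helper names `summarize_runs` and `percent_change` are made up for this example, and the three-run list is placeholder data, not the poster's actual runs. Only the 12809 and 13342 scores come from the post.

```python
from statistics import mean


def summarize_runs(scores):
    """Return (average, best) for a list of repeated benchmark scores."""
    return mean(scores), max(scores)


def percent_change(old, new):
    """Relative change from old to new, in percent of the old value."""
    return (new - old) / old * 100.0


# Placeholder scores for three runs of one configuration (not real data).
runs = [13100, 13342, 13250]
average, best = summarize_runs(runs)
print(f"average={average:.0f} best={best}")

# Old vs. new best Single GPU score quoted in the post.
change = percent_change(12809, 13342)
print(f"{change:.1f}%")  # 4.2%
```

A change of about 4% sits inside the 5% to 6% run-to-run variance reported for the AMD card above, which supports reading it as noise.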

-

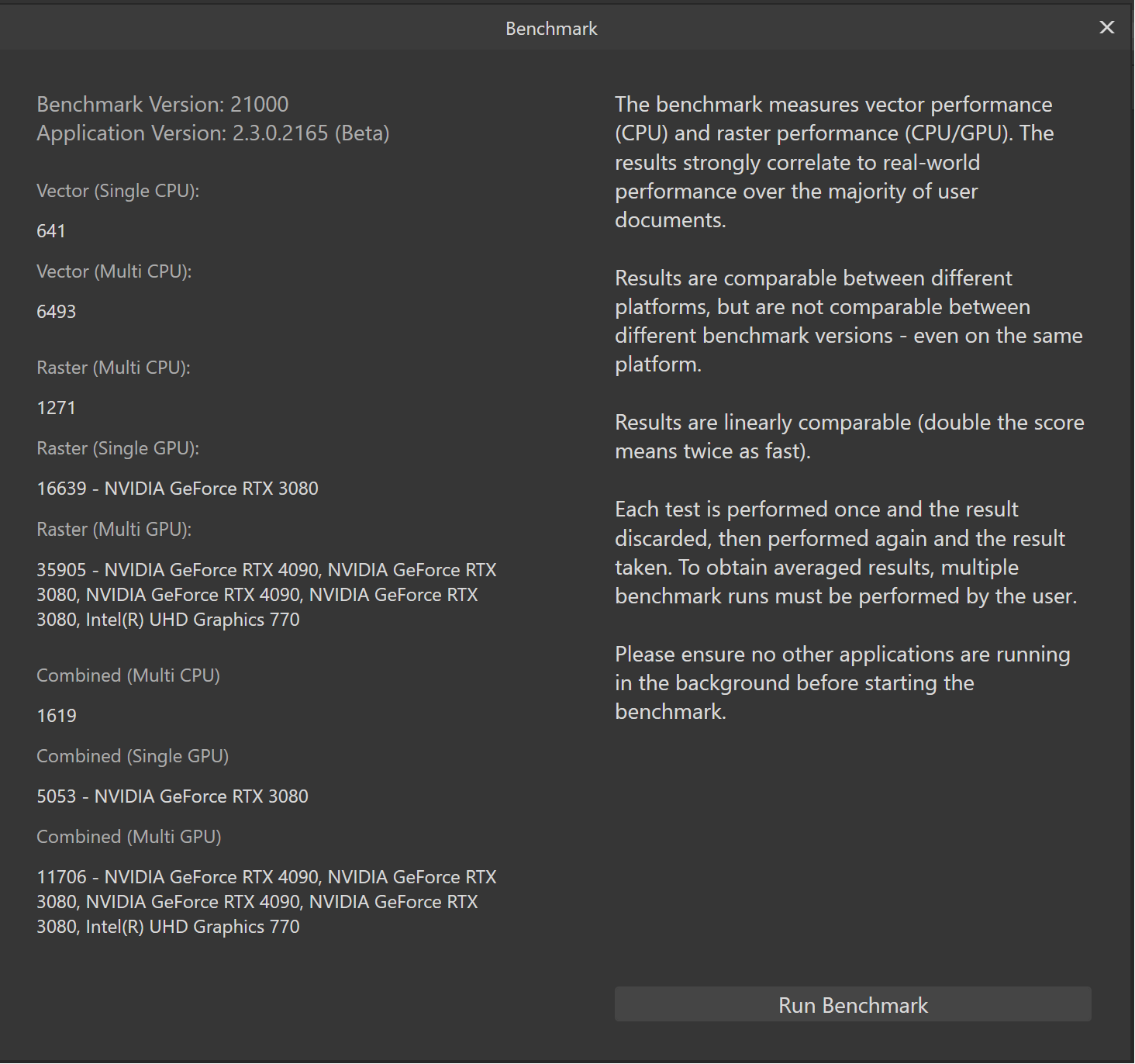

Can you please say more about this issue? I'm not able to run with OpenCL acceleration with either my RTX 4090 or my RTX 3080 on a fully patched Windows 11 Pro using the Nvidia 546.33 driver. I'm using the AP beta 2.3.0.2165 build. This same build with Windows 11 Pro runs fine on my laptop, which has an RTX 3060 laptop GPU.

The crash is happening inside nvvm64.dll at the bolded line below:

Not Flagged > 15244 0 Worker Thread Win64 Thread nvvm64.dll!00007ffeafb81149
nvvm64.dll!00007ffeafb81149()
nvvm64.dll!00007ffeafb7c23e()
nvvm64.dll!00007ffeafb884b5()
nvopencl64.dll!00007ffeb554cbb4()
nvopencl64.dll!00007ffeb554ddea()
nvopencl64.dll!00007ffeb552f788()
libraster.dll!00007ffecc47a2a1()
libraster.dll!00007ffecc47cde6()
libraster.dll!00007ffecd6a3415()
libraster.dll!00007ffecd6889e2()
libraster.dll!00007ffecd774ee9()
libpersona.dll!00007ffec8196408()
libkernel.dll!00007ffedb1335b1()
libkernel.dll!00007ffedb423231()
libkernel.dll!00007ffedb42573b()
ntdll.dll!TppWorkpExecuteCallback()
ntdll.dll!TppWorkerThread()
KERNEL32.dll!BaseThreadInitThunk()
ntdll.dll!RtlUserThreadStart()

The exception is: Unhandled exception at 0x00007FFEAFB81149 (nvvm64.dll) in 78a9f3cd-83b7-413a-b0bb-c117631567c2.dmp: 0xC0000005: Access violation reading location 0x0000000000000008.

Pretty much anything I do in AP with an image will cause it to crash, sometimes when loading, but always when I try to save an image or change a layer's opacity, for example. If I disable CUDA on both my cards, then AP will use the motherboard Intel GPU for acceleration without crashing. I am able to run the AP benchmark with CUDA on all cards turned on without it crashing, although the results are sometimes odd for the raster score. Any insight or workaround you can provide on this issue would be greatly appreciated.

-

AMD Radeon RX Hardware Acceleration

kkoukos replied to Mark Ingram's topic in V1 Bugs found on Windows

It is really nice that Serif has finally enabled OpenCL support for AMD GPUs in v2.0. Please keep it that way!!! Through some testing I came to the conclusion that it really works. Let's look not only at the benchmark but also at some real-world performance. I am using the latest AMD Pro Driver 22.Q4, but even with the Windows 11 default driver v.30 or v.31 it still works great. Actually the Windows 11 store driver v.30 might be slightly better.

The benchmark result is really low for the GPU tested (I have an RX 6900 XT) compared to the results in the thread below: when this GPU is used with Metal it is capable of a score of nearly 50000. When measured on Windows 11 I got only slightly more than 1000 (the results between versions are not directly comparable and are used just indicatively). So where does the difference of 50x come from, and does it really matter?

If you look at the picture below, I ran the benchmark and in parallel simply had the Windows performance monitor showing what's going on. The graphs cover only the benchmark, for its full duration. A close look at the graphs clearly explains why the performance is so low. During the benchmark the CPU is continuously utilized at almost 100% for the entire duration, even when GPU execution is taking place. It seems to me that GPU performance is actually throttled by the CPU's ability to JIT compile the OpenCL kernels. So no matter what GPU you have, RX 5500 or RX 5700 or RX 6900, the result will be pretty much the same, because it is limited anyway by the CPU's ability to compile the kernels. Similarly, if the CPU can compile and spawn kernels at a higher rate, most likely the GPU performance will increase. Now if we take a look at the GPU, we observe that it is utilized at a peak of less than 15%.
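
The CPU-bound argument above can be illustrated with a toy model. This is only a sketch under strong assumptions: each operation is modelled as a CPU compile step followed serially by a GPU compute step, and every timing value is invented for illustration, not measured on any of the cards mentioned. The function `ops_per_second` and the GPU labels are hypothetical.

```python
def ops_per_second(cpu_compile_ms, gpu_compute_ms):
    """Throughput when each operation compiles on the CPU, then runs on the GPU, serially."""
    return 1000.0 / (cpu_compile_ms + gpu_compute_ms)


# Made-up per-operation GPU compute times; the fast GPU is 4x the slow one.
GPU_COMPUTE_MS = {"slow GPU": 4.0, "mid GPU": 2.0, "fast GPU": 1.0}


def compare(cpu_compile_ms):
    """Return throughput per GPU for a given per-operation compile cost."""
    return {
        name: ops_per_second(cpu_compile_ms, ms)
        for name, ms in GPU_COMPUTE_MS.items()
    }


recompiling = compare(cpu_compile_ms=50.0)  # kernels compiled on every operation
cached = compare(cpu_compile_ms=0.0)        # kernels already compiled

for label, result in (("recompiling", recompiling), ("cached", cached)):
    for name, rate in result.items():
        print(f"{label:12s} {name:9s} {rate:7.1f} ops/s")
```

In this model the 4x faster GPU gains only about 6% throughput while compilation dominates, but the full 4x once the compile cost disappears, which is the shape of behaviour the post describes.
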
NVIDIA's implementation (and I believe the same applies to Apple's Metal) pre-caches compiled OpenCL kernels, and as a result the benchmark result is substantially higher just because the CPU doesn't need to recompile the kernels.

But the most interesting question is: does it really matter? To find out if the low benchmark result affects the user experience, I stitched a large panorama, exported it as a large 16-bit TIFF and reloaded it (without layers or anything else), and started testing the live blur filters. To my surprise, the behavior of the application was excellent. Those filters that were implemented to use the GPU worked really smoothly and flawlessly. Monitoring the GPU activity, I verified that there was copy and compute activity. The compute activity was actually higher than in the benchmark but still resulted in device under-utilization, which is pretty normal considering that most likely I didn't have enough data to fully utilize the GPU.

To conclude, I think it's really great that Serif has finally enabled OpenCL acceleration on AMD GPUs. The benchmark results might be on the low side, but I believe this doesn't necessarily translate to a bad user experience, as the typical usage scenario when you apply an OpenCL filter is to spend at least a few seconds until you get the right result. And there is of course plenty of room for optimization by the developers (e.g., manual kernel pre-caching and compilation at startup, etc.), although I don't think it's needed. The typical overhead of the driver (as I measured it using hello-world like code) is in the range of a few ms (50-70), so it should go completely unnoticed even if it happens at filter loading. So don't stick to the benchmark, test it out.

-

Poor export quality of PNG and JPG.

debraspicher replied to Designer1's topic in V2 Bugs found on Windows

@Bit Arts Lovely points and it was nice to read these anecdotes. So much to think about in one post. On the topic, I tend to work on high DPI illustrations that I sometimes translate to vector out of program... then export for laser cutters/assets for designers... and then output assets for websites. My images will tend to be very small scale... or rather large. I do also sometimes resize difficult images to a much smaller size and fill in/render the details back in by hand to fix any problem areas. I used to be able to do all of this in Photoshop, but have since learned that with Affinity I need to do these touch ups in Clip Studio due to the lack of pressure-based opacity in the Brush tool. Edit: I point this out because I find that working at both extremes of the scale can sometimes unearth different problems.

Anyway, I also use tones and patterned texture a lot in my designs, and when comparing both programs, the disparity in AA curves shows up pretty clearly when exporting the same curves at small sizes. An example I created: Left: Illustrator Right: Affinity

I post this example not to say whether I think one is worse or better. I post it to show that there is indeed a significant difference in how AA/output is handled by the two programs. I would say that Affinity's reads blurrier, as I've said before, because 1) it appears to utilize a linear AA "curve", which is effectively a subpixel black to transparent gradient that is more akin to "Outer Glow" or feathering, and 2) thus the AA tends to taper off too fast compared to other implementations. Fonts that are not well hinted for screens at smaller point sizes to begin with, or display type that has a lot of edge detail, will be impacted the most. Of course it is distracting as well if we lay an image with heavily AA'ed type near a paragraph of text in a web browser rendered at high DPI... then the difference in quality will be much more apparent.

Other programs appear to apply a heavier weight to the edge details, and this is perhaps because the AA/output curve is hand-tweaked by the designers of the program and thus gives a more "hinted" appearance (which increases clarity) on output, but also legibility for smaller points of type... The other thing to consider is how many fonts across the web were "tested" or even designed from within Adobe programs... therefore, their hinting algorithms/curves could be seen as something of a benchmark in some cases...

Perhaps this could be helped if Coverage Map were fixed and/or we had an option to control AA at an application setting/document level, or just had access to specialized AA for text... I care about this greatly, because for web, little details like this go a long way to push an icon or a site's logo to the next level. Of course there is always SVG, but that is not always an option, especially if the text has artwork embedded within it... Edit: Attached single samples: Edit: clarity

-

I know it’s been a while, but I’ve had some serious struggles with this method (TIFF from AP, ICC profile, etc). I’ve gone through all the steps, but no joy. Profiles come out super dark and reds are hot hot hot. I have no idea why this is happening. Have you run into similar issues? I just got the color checker to help make my colors more consistent (I’m colorblind and figured having a benchmark would help), but if I can’t use it, it’s definitely not worth the price tag!! Please help!